
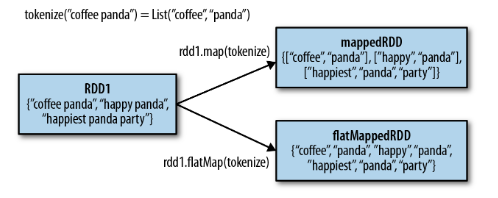

Spark Streaming is an extension of the core Spark API that enables high-throughput, fault-tolerant stream processing of live data streams. Data can be ingested from sources like Kafka, Flume, Twitter, ZeroMQ or plain old TCP sockets and be processed using complex algorithms expressed with high-level functions like map, reduce, join and window. Finally, processed data can be pushed out to filesystems, databases, and live dashboards. Spark's graph processing algorithms can also be applied to data streams.

Spark Streaming receives live input data streams and divides the data into batches. The Spark engine then processes these batches to generate the final stream of results in batches.

Spark Streaming provides a high-level abstraction called discretized stream, or DStream, which represents a continuous stream of data. DStreams can be created in two ways: from input data streams from sources such as Kafka and Flume, or by applying high-level operations to other DStreams. Internally, a DStream is represented as a sequence of RDDs.

This guide shows you how to start writing Spark Streaming programs with DStreams. You can write Spark Streaming programs in Scala or Java, and both are presented in this guide.

Before we go into the details of how to write your own Spark Streaming program, let's take a quick look at what a simple Spark Streaming program looks like. Let's say we want to count the number of words in text data received from a data server listening on a TCP socket. In Java, the counting step looks like this:

    // Count each word in each batch
    JavaPairDStream<String, Integer> pairs = words.mapToPair(s -> new Tuple2<>(s, 1));
    JavaPairDStream<String, Integer> wordCounts = pairs.reduceByKey((i1, i2) -> i1 + i2);
    wordCounts.print(); // Print a few of the counts to the console

The words DStream is further mapped (a one-to-one transformation) to a DStream of (word, 1) pairs. That DStream is then reduced to get the frequency of words in each batch of data. Finally, wordCounts.print() will print a few of the counts generated every second.

Note that when these lines are executed, Spark Streaming only sets up the computation it will perform when it is started. No real processing has started yet. Processing begins only when the computation is explicitly started, after all the transformations have been set up.

In the Spark shell, the StreamingContext is created by wrapping the shell's existing SparkContext, sc. Here it uses a one-second batch interval:

    val ssc = new StreamingContext(sc, Seconds(1))

When working with the shell, you may also need to send a ^D to your netcat session. This forces the pipeline to print the word counts to the console at the sink.
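The per-batch word count described above can be modelled without Spark at all. The sketch below is a plain-Scala illustration, not Spark's API. It assumes the live input has already been cut into batches, where each batch holds the lines received during one interval. It then applies the same steps to every batch: split into words, map to (word, 1) pairs, reduce per word. The result is one count map per batch. The object name BatchWordCount and its methods are hypothetical.

```scala
// Plain-Scala model of the word-count pipeline (no Spark dependency).
// Assumption: the input stream is already discretized into batches,
// each batch being the lines received during one batch interval.
object BatchWordCount {

  // The example's steps applied to a single batch:
  // split lines into words, map each word to (word, 1), then sum per key.
  def countBatch(lines: Seq[String]): Map[String, Int] = {
    val words = lines.flatMap(_.split(" "))
    val pairs = words.map(word => (word, 1))
    // groupBy + reduce stands in for Spark's reduceByKey(_ + _)
    pairs.groupBy(_._1).map { case (word, ps) => word -> ps.map(_._2).reduce(_ + _) }
  }

  // A discretized stream modelled as a sequence of batches:
  // the output is a sequence of per-batch counts, one entry per batch.
  def countStream(batches: Seq[Seq[String]]): Seq[Map[String, Int]] =
    batches.map(countBatch)
}
```

Take two batches, Seq("hello world", "hello spark") and Seq("spark streaming"), and pass them to BatchWordCount.countStream. It gives hello -> 2, world -> 1, spark -> 1 for the first batch and spark -> 1, streaming -> 1 for the second. Counts are never carried over between batches. In Spark the reduce step runs in parallel across the cluster, whereas here it is an ordinary local collection operation.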

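A small model can also show that executing the pipeline definition does not process any data. MiniStreamingContext below is hypothetical and is not Spark's StreamingContext. Registering an operation only records it, and batches flow through the registered operations only once start is called. The sketch also rejects new operations after start. This models Spark's rule that no new streaming computations can be added to a context once it has started.

```scala
import scala.collection.mutable.ListBuffer

// Hypothetical model (not Spark's API) of "set up first, run on start".
final class MiniStreamingContext {
  // Operations recorded during setup; none of them runs at registration time.
  private val operations = ListBuffer.empty[Seq[String] => Unit]
  private var started = false

  // Records an operation to apply to every batch and returns immediately.
  def register(op: Seq[String] => Unit): Unit = {
    require(!started, "no new operations can be added after start()")
    operations += op
  }

  // Begins processing: every batch is passed to every registered operation,
  // in the order the batches arrive.
  def start(batches: Iterator[Seq[String]]): Unit = {
    started = true
    batches.foreach(batch => operations.foreach(op => op(batch)))
  }
}
```

Suppose an operation is registered that counts the words in each batch. Nothing is counted until start(Iterator(Seq("a b a"), Seq("b"))) runs, which then yields 3 and 1. For simplicity this sketch processes the batches synchronously inside start. Spark's start returns while processing continues in the background, which is why a separate call is used to wait for termination.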
